Working Group 1 - Chapter 1: Historical Overview of Climate Change Science - (AR4-WG1-1)

Original at: http://www.ipcc.ch/publications_and_data/ar4/wg1/en/ch1.html


With the exception of Chapter and Section headings, all coloured text has been inserted by AccessIPCC. The non-coloured text is the IPCC original.

A number of emails from the Climate Research Unit (CRU) of the University of East Anglia were published on the Internet in November 2009. This has provided a window into the world of climate science.

We have identified a number of key individuals involved in the emails whom we have designated as Persons of Concern [PoC]; a Journal in which a PoC has published has been designated as a Journal of Concern [JoC].

This is not to suggest that we believe such papers are necessarily flawed, but rather that, as Joseph Alcamo noted at Bali in October 2009, "as policymakers and the public begin to grasp the multi-billion dollar price tag for mitigating and adapting to climate change, we should expect a sharper questioning of the science behind climate policy".

References occur in a list at the end of each chapter. Citations are within the normal text of sections and paragraphs.

| Tag | Explanation | Where Used | References | Citations |
|---|---|---|---|---|
| PoC | Person of Concern: Key individual involved in CRU emails as defined in this spreadsheet. | References, Citations, IPCC Roles | 13 | 11 |
| JoC | Journal of Concern: A Journal which has published articles by one or more PoCs (Person of Concern). | References, Citations | 157 | 176 |
| MoS | Model or Simulation: Reference appears to be a model or simulation, not observation or experiment. | References, Citations | 57 | 67 |
| NPR | Non Peer Reviewed: Reference has no Journal or no Volume or no Pages or it has Editors. | References, Citations | 62 | 79 |
| SRC | Self Reference Concern: Author of a chapter containing references to own work. | References, Citations, IPCC Roles | 32 | 28 |
| ARC | Paper authored or co-authored by person who is also in list of Authors of another chapter. | References, Citations | 52 | 56 |
| 2007 | Paper dated 2007, when IPCC policy stated cutoff was December 2005. | References, Citations | - | - |
| Ambiguous | The short inline citation matched with more than one reference; however, AccessIPCC will link to the first reference found. | Citations | - | 1 |
| NotFound | The short inline citation was not matched with any reference. Believed to be caused by typing errors. | Citations | - | - |
| Clean | The reference was probably peer reviewed. | References, Citations | 35 | 33 |

Coordinating Lead Authors:

Hervé Le Treut (France) [SRC:2], Richard Somerville (USA) [SRC:2],

| Concern | Occurrence |
|---|---|
| SRC 1-4 | 2 |
| Potentially Biased Authors | 2 |

Lead Authors:

Ulrich Cubasch (Germany) [SRC:4], Yihui Ding (China), Cecilie Mauritzen (Norway), Abdalah Mokssit (Morocco), Thomas Peterson (USA) [SRC:2], Michael Prather (USA) [SRC:1],

| Concern | Occurrence |
|---|---|
| SRC 1-4 | 3 |
| Potentially Biased Authors | 3 |
| Impartial Authors | 3 |

Contributing Authors:

M. Allen (UK) [SRC:1], I. Auer (Austria), J. Biercamp (Germany) [SRC:1], C. Covey (USA), J.R. Fleming (USA) [SRC:1], R. García-Herrera (Spain), P. Gleckler (USA), J. Haigh (UK) [SRC:1], G.C. Hegerl (USA; Germany) [SRC:3], K. Isaksen (Norway), J. Jones (Germany; UK), J. Luterbacher (Switzerland) [SRC:2], M. MacCracken (USA), J.E. Penner (USA) [SRC:1], C. Pfister (Switzerland) [SRC:1], E. Roeckner (Germany) [SRC:1], B. Santer (USA) [SRC:4][PoC], F. Schott (Germany), F. Sirocko (Germany), A. Staniforth (UK), T.F. Stocker (Switzerland) [SRC:1], R.J. Stouffer (USA) [SRC:2], K.E. Taylor (USA) [SRC:1], K.E. Trenberth (USA) [SRC:2][PoC], A. Weisheimer (ECMWF; Germany), M. Widmann (Germany; UK),

| Concern | Occurrence |
|---|---|
| PoC | 2 |
| SRC 1-4 | 14 |
| Potentially Biased Authors | 14 |
| Impartial Authors | 12 |

Review Editors:

Alphonsus Baede (Netherlands), David Griggs (UK),

| Concern | Occurrence |
|---|---|
| Impartial Authors | 2 |

This chapter should be cited as:

Le Treut, H., R. Somerville, U. Cubasch, Y. Ding, C. Mauritzen, A. Mokssit, T. Peterson and M. Prather, 2007: Historical Overview of Climate Change. In: Climate Change 2007: The Physical Science Basis. Contribution of Working Group I to the Fourth Assessment Report of the Intergovernmental Panel on Climate Change [Solomon, S., D. Qin, M. Manning, Z. Chen, M. Marquis, K.B. Averyt, M. Tignor and H.L. Miller (eds.)]. Cambridge University Press, Cambridge, United Kingdom and New York, NY, USA.

Executive Summary

Awareness and a partial understanding of most of the interactive processes in the Earth system that govern climate and climate change predate the IPCC, often by many decades. A deeper understanding and quantification of these processes and their incorporation in climate models have progressed rapidly since the IPCC First Assessment Report in 1990.

As climate science and the Earth’s climate have continued to evolve over recent decades, increasing evidence of anthropogenic influences on climate change has been found. Correspondingly, the IPCC has made increasingly more definitive statements about human impacts on climate.

Debate has stimulated a wide variety of climate change research. The results of this research have refined but not significantly redirected the main scientific conclusions from the sequence of IPCC assessments.

1.1 Overview of the Chapter

To better understand the science assessed in this Fourth Assessment Report (AR4), it is helpful to review the long historical perspective that has led to the current state of climate change knowledge. This chapter starts by describing the fundamental nature of earth science. It then describes the history of climate change science using a wide-ranging subset of examples, and ends with a history of the IPCC.

The concept of this chapter is new. There is no counterpart in previous IPCC assessment reports for an introductory chapter providing historical context for the remainder of the report. Here, a restricted set of topics has been selected to illustrate key accomplishments and challenges in climate change science. The topics have been chosen for their significance to the IPCC task of assessing information relevant for understanding the risks of human-induced climate change, and also to illustrate the complex and uneven pace of scientific progress.

In this chapter, the time frame under consideration stops with the publication of the Third Assessment Report (TAR; IPCC, 2001a [NPR]). Developments subsequent to the TAR are described in the other chapters of this report, and we refer to these chapters throughout this first chapter.

1.2 The Nature of Earth Science

Science may be stimulated by argument and debate, but it generally advances through formulating hypotheses clearly and testing them objectively. This testing is the key to science. In fact, one philosopher of science insisted that to be genuinely scientific, a statement must be susceptible to testing that could potentially show it to be false (Popper, 1934 [NPR]). In practice, contemporary scientists usually submit their research findings to the scrutiny of their peers, which includes disclosing the methods that they use, so their results can be checked through replication by other scientists. The insights and research results of individual scientists, even scientists of unquestioned genius, are thus confirmed or rejected in the peer-reviewed literature by the combined efforts of many other scientists. It is not the belief or opinion of the scientists that is important, but rather the results of this testing. Indeed, when Albert Einstein was informed of the publication of a book entitled 100 Authors Against Einstein, he is said to have remarked, ‘If I were wrong, then one would have been enough!’ (Hawking, 1988 [NPR]); however, that one opposing scientist would have needed proof in the form of testable results.

Thus science is inherently self-correcting; incorrect or incomplete scientific concepts ultimately do not survive repeated testing against observations of nature. Scientific theories are ways of explaining phenomena and providing insights that can be evaluated by comparison with physical reality. Each successful prediction adds to the weight of evidence supporting the theory, and any unsuccessful prediction demonstrates that the underlying theory is imperfect and requires improvement or abandonment. Sometimes, only certain kinds of questions tend to be asked about a scientific phenomenon until contradictions build to a point where a sudden change of paradigm takes place (Kuhn, 1996 [NPR]). At that point, an entire field can be rapidly reconstructed under the new paradigm.

Despite occasional major paradigm shifts, the majority of scientific insights, even unexpected insights, tend to emerge incrementally as a result of repeated attempts to test hypotheses as thoroughly as possible. Therefore, because almost every new advance is based on the research and understanding that has gone before, science is cumulative, with useful features retained and non-useful features abandoned. Active research scientists, throughout their careers, typically spend large fractions of their working time studying in depth what other scientists have done. Superficial or amateurish acquaintance with the current state of a scientific research topic is an obstacle to a scientist’s progress. Working scientists know that a day in the library can save a year in the laboratory. Even Sir Isaac Newton (1675 [NPR]) wrote that if he had ‘seen further it is by standing on the shoulders of giants’. Intellectual honesty and professional ethics call for scientists to acknowledge the work of predecessors and colleagues.

The attributes of science briefly described here can be used in assessing competing assertions about climate change. Can the statement under consideration, in principle, be proven false? Has it been rigorously tested? Did it appear in the peer-reviewed literature? Did it build on the existing research record where appropriate? If the answer to any of these questions is no, then less credence should be given to the assertion until it is tested and independently verified. The IPCC assesses the scientific literature to create a report based on the best available science (Section 1.6). It must be acknowledged, however, that the IPCC also contributes to science by identifying the key uncertainties and by stimulating and coordinating targeted research to answer important climate change questions.

A characteristic of Earth sciences is that Earth scientists are unable to perform controlled experiments on the planet as a whole and then observe the results. In this sense, Earth science is similar to the disciplines of astronomy and cosmology that cannot conduct experiments on galaxies or the cosmos. This is an important consideration, because it is precisely such whole-Earth, system-scale experiments, incorporating the full complexity of interacting processes and feedbacks, that might ideally be required to fully verify or falsify climate change hypotheses (Schellnhuber et al., 2004 [NPR]). Nevertheless, countless empirical tests of numerous different hypotheses have built up a massive body of Earth science knowledge. This repeated testing has refined the understanding of numerous aspects of the climate system, from deep oceanic circulation to stratospheric chemistry. Sometimes a combination of observations and models can be used to test planetary-scale hypotheses. For example, the global cooling and drying of the atmosphere observed after the eruption of Mt. Pinatubo (Section 8.6) provided key tests of particular aspects of global climate models (Hansen et al., 1992 [JoC, ARC]).

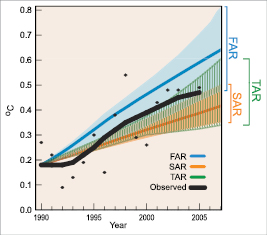

Another example is provided by past IPCC projections of future climate change compared to current observations. Figure 1.1 reveals that the model projections of global average temperature from the First Assessment Report (FAR; IPCC, 1990 [NPR]) were higher than those from the Second Assessment Report (SAR; IPCC, 1996 [NPR]). Subsequent observations (Section 3.2) showed that the evolution of the actual climate system fell midway between the FAR and the SAR ‘best estimate’ projections and was within or near the upper range of projections from the TAR (IPCC, 2001a [NPR]).

Figure 1.1. Yearly global average surface temperature (Brohan et al., 2006 [JoC, MoS]), relative to the mean 1961 to 1990 values, and as projected in the FAR (IPCC, 1990 [NPR]), SAR (IPCC, 1996 [NPR]) and TAR (IPCC, 2001a [NPR]). The ‘best estimate’ model projections from the FAR and SAR are in solid lines with their range of estimated projections shown by the shaded areas. The TAR did not have ‘best estimate’ model projections but rather a range of projections. Annual mean observations (Section 3.2) are depicted by black circles and the thick black line shows decadal variations obtained by smoothing the time series using a 13-point filter.
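The smoothing step mentioned in the caption can be illustrated with a minimal sketch. The caption does not give the filter weights or the treatment of the series ends, so both are assumptions here: the hypothetical `binomial_weights` helper supplies a 13-point binomial window, and `smooth` renormalises the weights where the window runs past the data.

```python
def binomial_weights(n_points=13):
    """Return row (n_points - 1) of Pascal's triangle as filter weights.

    Assumption: the caption does not state the filter weights, so
    binomial weights stand in purely for illustration.
    """
    row = [1]
    for _ in range(n_points - 1):
        row = [a + b for a, b in zip([0] + row, row + [0])]
    return row


def smooth(series, weights):
    """Weighted moving average with a symmetric, odd-length window.

    Near the ends, only the weights that overlap the data are used and
    the result is renormalised (one of several possible end treatments).
    """
    half = len(weights) // 2
    smoothed = []
    for i in range(len(series)):
        total = 0.0
        weight_sum = 0.0
        for k, w in enumerate(weights):
            j = i + k - half
            if 0 <= j < len(series):
                total += w * series[j]
                weight_sum += w
        smoothed.append(total / weight_sum)
    return smoothed


# Made-up annual anomalies (deg C), for illustration only.
annual = [0.1, -0.2, 0.3, 0.0, 0.4, 0.2, 0.5, 0.1, 0.6, 0.3, 0.7, 0.4, 0.8, 0.5, 0.9]
decadal = smooth(annual, binomial_weights())
```

Because the window is symmetric, a constant or linear series passes through unchanged away from the ends, while year-to-year fluctuations are damped.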

Not all theories or early results are verified by later analysis. In the mid-1970s, several articles about possible global cooling appeared in the popular press, primarily motivated by analyses indicating that Northern Hemisphere (NH) temperatures had decreased during the previous three decades (e.g., Gwynne, 1975). In the peer-reviewed literature, a paper by Bryson and Dittberner (1976 [JoC, MoS]) reported that increases in carbon dioxide (CO2) should be associated with a decrease in global temperatures. When challenged by Woronko (1977 [JoC, MoS]), Bryson and Dittberner (1977 [JoC]) explained that the cooling projected by their model was due to aerosols (small particles in the atmosphere) produced by the same combustion that caused the increase in CO2. However, because aerosols remain in the atmosphere only a short time compared to CO2, the results were not applicable for long-term climate change projections. This example of a prediction of global cooling is a classic illustration of the self-correcting nature of Earth science. The scientists involved were reputable researchers who followed the accepted paradigm of publishing in scientific journals, submitting their methods and results to the scrutiny of their peers (although the peer-review did not catch this problem), and responding to legitimate criticism.

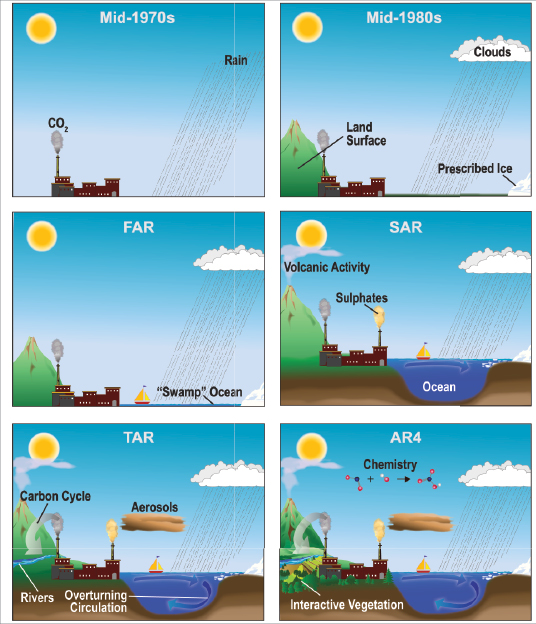

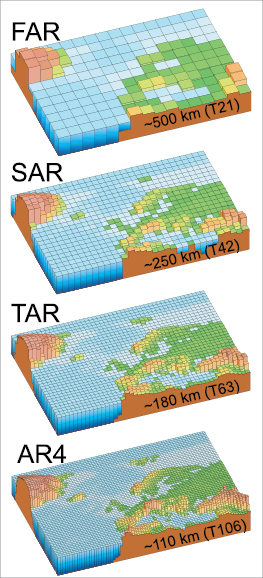

A recurring theme throughout this chapter is that climate science in recent decades has been characterised by the increasing rate of advancement of research in the field and by the notable evolution of scientific methodology and tools, including the models and observations that support and enable the research. During the last four decades, the rate at which scientists have added to the body of knowledge of atmospheric and oceanic processes has accelerated dramatically. As scientists incrementally increase the totality of knowledge, they publish their results in peer-reviewed journals. Between 1965 and 1995, the number of articles published per year in atmospheric science journals tripled (Geerts, 1999 [JoC]). Focusing more narrowly, Stanhill (2001 [JoC]) found that the climate change science literature grew approximately exponentially with a doubling time of 11 years for the period 1951 to 1997. Furthermore, 95% of all the climate change science literature since 1834 was published after 1951. Because science is cumulative, this represents considerable growth in the knowledge of climate processes and in the complexity of climate research. An important example of this is the additional physics incorporated in climate models over the last several decades, as illustrated in Figure 1.2. As a result of the cumulative nature of science, climate science today is an interdisciplinary synthesis of countless tested and proven physical processes and principles painstakingly compiled and verified over several centuries of detailed laboratory measurements, observational experiments and theoretical analyses; and is now far more wide-ranging and physically comprehensive than was the case only a few decades ago.
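To give a sense of scale for the 11-year doubling time quoted above, the following short sketch converts it into an equivalent annual growth rate and into the overall growth factor for 1951 to 1997. It assumes idealised, perfectly exponential growth; the function names are illustrative and not taken from the cited study.

```python
import math


def annual_growth_rate(doubling_time_years):
    """Continuous growth rate (per year) implied by a doubling time."""
    return math.log(2) / doubling_time_years


def growth_factor(doubling_time_years, span_years):
    """Factor by which the annual output grows over span_years."""
    return 2 ** (span_years / doubling_time_years)


rate = annual_growth_rate(11)              # a little over 6% per year
factor = growth_factor(11, 1997 - 1951)    # roughly an 18-fold increase

print(f"growth rate: {100 * rate:.1f}% per year")
print(f"growth factor over 46 years: {factor:.1f}")
```

Under this idealisation, annual publication output at the end of the period would be roughly 18 times that at the start.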

1.3 Examples of Progress in Detecting and Attributing Recent Climate Change

1.3.1 The Human Fingerprint on Greenhouse Gases

The high-accuracy measurements of atmospheric CO2 concentration, initiated by Charles David Keeling in 1958, constitute the master time series documenting the changing composition of the atmosphere (Keeling, 1961 [ARC], 1998). These data have iconic status in climate change science as evidence of the effect of human activities on the chemical composition of the global atmosphere (see FAQ 7.1). Keeling’s measurements on Mauna Loa in Hawaii provide a true measure of the global carbon cycle, an effectively continuous record of the burning of fossil fuel. They also maintain an accuracy and precision that allow scientists to separate fossil fuel emissions from those due to the natural annual cycle of the biosphere, demonstrating a long-term change in the seasonal exchange of CO2 between the atmosphere, biosphere and ocean. Later observations of parallel trends in the atmospheric abundances of the 13CO2 isotope (Francey and Farquhar, 1982 [JoC]) and molecular oxygen (O2) (Keeling and Shertz, 1992 [JoC, ARC]; Bender et al., 1996 [JoC]) uniquely identified this rise in CO2 with fossil fuel burning (Sections 2.3, 7.1 and 7.3).

To place the increase in CO2 abundance since the late 1950s in perspective, and to compare the magnitude of the anthropogenic increase with natural cycles in the past, a longer-term record of CO2 and other natural greenhouse gases is needed. These data came from analysis of the composition of air enclosed in bubbles in ice cores from Greenland and Antarctica. The initial measurements demonstrated that CO2 abundances were significantly lower during the last ice age than over the last 10 kyr of the Holocene (Delmas et al., 1980 [JoC]; Berner et al., 1980; Neftel et al., 1982 [JoC]). From 10 kyr before present up to the year 1750, CO2 abundances stayed within the range 280 ± 20 ppm (Indermühle et al., 1999 [JoC]). During the industrial era, CO2 abundance rose roughly exponentially to 367 ppm in 1999 (Neftel et al., 1985 [JoC]; Etheridge et al., 1996 [JoC, ARC]; IPCC, 2001a [NPR]) and to 379 ppm in 2005 (Section 2.3.1; see also Section 6.4).

Direct atmospheric measurements since 1970 (Steele et al., 1996 [NPR]) have also detected the increasing atmospheric abundances of two other major greenhouse gases, methane (CH4) and nitrous oxide (N2O). Methane abundances were initially increasing at a rate of about 1% per year (Graedel and McRae, 1980 [JoC]; Fraser et al., 1981 [JoC, ARC]; Blake et al., 1982 [JoC]) but then slowed to an average increase of 0.4% per year over the 1990s (Dlugokencky et al., 1998 [JoC, ARC]) with the possible stabilisation of CH4 abundance (Section 2.3.2). The increase in N2O abundance is smaller, about 0.25% per year, and more difficult to detect (Weiss, 1981 [JoC, ARC]; Khalil and Rasmussen, 1988). To go back in time, measurements were made from firn air trapped in snowpack dating back over 200 years, and these data show an accelerating rise in both CH4 and N2O into the 20th century (Machida et al., 1995 [JoC]; Battle et al., 1996 [JoC]). When ice core measurements extended the CH4 abundance back 1 kyr, they showed a stable, relatively constant abundance of 700 ppb until the 19th century when a steady increase brought CH4 abundances to 1,745 ppb in 1998 (IPCC, 2001a [NPR]) and 1,774 ppb in 2005 (Section 2.3.2). This peak abundance is much higher than the range of 400 to 700 ppb seen over the last half-million years of glacial-interglacial cycles, and the increase can be readily explained by anthropogenic emissions. For N2O the results are similar: the relative increase over the industrial era is smaller (15%), yet the 1998 abundance of 314 ppb (IPCC, 2001a [NPR]), rising to 319 ppb in 2005 (Section 2.3.3), is also well above the 180-to-260 ppb range of glacial-interglacial cycles (Flückiger et al., 1999 [JoC]; see Sections 2.3, 6.2, 6.3, 6.4, 7.1 and 7.4).
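The abundances quoted in this section can be turned into relative increases with simple arithmetic. The sketch below uses only numbers stated in the text (280 ppm and 379 ppm for CO2, 700 ppb and 1,774 ppb for CH4, and the 1% and 0.4% per year CH4 growth rates). Taking the centre of the 280 ± 20 ppm range as the pre-industrial CO2 value, and treating the growth rates as constant compound rates, are simplifying assumptions made for illustration.

```python
import math


def percent_increase(before, after):
    """Relative increase from `before` to `after`, in percent."""
    return 100.0 * (after - before) / before


def doubling_time(percent_per_year):
    """Years needed to double at a constant compound growth rate."""
    return math.log(2) / math.log(1 + percent_per_year / 100.0)


# Pre-industrial versus 2005 abundances quoted in the text.
co2_rise = percent_increase(280, 379)    # ppm; about one-third higher
ch4_rise = percent_increase(700, 1774)   # ppb; more than double

# Doubling times implied by the early and the 1990s CH4 growth rates.
ch4_doubling_early = doubling_time(1.0)
ch4_doubling_1990s = doubling_time(0.4)

print(f"CO2: +{co2_rise:.0f}%, CH4: +{ch4_rise:.0f}%")
print(f"CH4 doubling time: {ch4_doubling_early:.0f} yr early on, "
      f"{ch4_doubling_1990s:.0f} yr at the 1990s rate")
```

The comparison of doubling times shows how marked the slowdown in CH4 growth was: at the 1990s rate, a doubling would take roughly two and a half times as long as at the earlier rate.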

Several synthetic halocarbons (chlorofluorocarbons (CFCs), hydrofluorocarbons, perfluorocarbons, halons and sulphur hexafluoride) are greenhouse gases with large global warming potentials (GWPs; Section 2.10). The chemical industry has been producing these gases and they have been leaking into the atmosphere since about 1930. Lovelock (1971 [JoC]) first measured CFC-11 (CFCl3) in the atmosphere, noting that it could serve as an artificial tracer, with its north-south gradient reflecting the latitudinal distribution of anthropogenic emissions. Atmospheric abundances of all the synthetic halocarbons were increasing until the 1990s, when the abundance of halocarbons phased out under the Montreal Protocol began to fall (Montzka et al., 1999 [JoC, MoS, ARC]; Prinn et al., 2000 [JoC, ARC]). In the case of synthetic halocarbons (except perfluoromethane), ice core research has shown that these compounds did not exist in ancient air (Langenfelds et al., 1996 [NPR]) and thus confirms their industrial human origin (see Sections 2.3 and 7.1).

At the time of the TAR scientists could say that the abundances of all the well-mixed greenhouse gases during the 1990s were greater than at any time during the last half-million years (Petit et al., 1999 [JoC]), and this record now extends back nearly one million years (Section 6.3). Given this daunting picture of increasing greenhouse gas abundances in the atmosphere, it is noteworthy that, for simpler challenges but still on a hemispheric or even global scale, humans have shown the ability to undo what they have done. Sulphate pollution in Greenland was reversed in the 1980s with the control of acid rain in North America and Europe (IPCC, 2001b [NPR]), and CFC abundances are declining globally because of their phase-out undertaken to protect the ozone layer.

1.3.2 Global Surface Temperature

Shortly after the invention of the thermometer in the early 1600s, efforts began to quantify and record the weather. The first meteorological network was formed in northern Italy in 1653 (Kington, 1988 [NPR]) and reports of temperature observations were published in the earliest scientific journals (e.g., Wallis and Beale, 1669 [NPR]). By the latter part of the 19th century, systematic observations of the weather were being made in almost all inhabited areas of the world. Formal international coordination of meteorological observations from ships commenced in 1853 (Quetelet, 1854).

Inspired by the paper Suggestions on a Uniform System of Meteorological Observations (Buys-Ballot, 1872), the International Meteorological Organization (IMO) was formed in 1873. Its successor, the World Meteorological Organization (WMO), still works to promote and exchange standardised meteorological observations. Yet even with uniform observations, there are still four major obstacles to turning instrumental observations into accurate global time series: (1) access to the data in usable form; (2) quality control to remove or edit erroneous data points; (3) homogeneity assessments and adjustments where necessary to ensure the fidelity of the data; and (4) area-averaging in the presence of substantial gaps.

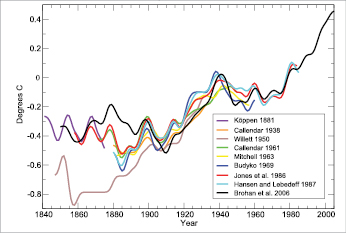

Köppen (1873, 1880, 1881) was the first scientist to overcome most of these obstacles in his quest to study the effect of changes in sunspots (Section 2.7). Much of his data came from Dove (1852), but wherever possible he used data directly from the original source, because Dove often lacked information about the observing methods. Köppen considered examination of the annual mean temperature to be an adequate technique for quality control of far distant stations. Using data from more than 100 stations, Köppen averaged annual observations into several major latitude belts and then area-averaged these into a near-global time series shown in Figure 1.3.
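The idea of combining latitude-belt averages into a single area-weighted value can be sketched as follows. This is not a reconstruction of Köppen's actual calculation, whose belts and weights are not given here. It assumes a spherical Earth, on which the surface area of a belt is proportional to the difference of the sines of its bounding latitudes, and it simply skips belts with no data. The belt boundaries and values are made up.

```python
import math


def belt_weight(lat_south, lat_north):
    """Relative surface area of a latitude belt on a sphere."""
    return math.sin(math.radians(lat_north)) - math.sin(math.radians(lat_south))


def area_average(belts):
    """Area-weighted mean of belt values.

    `belts` is a list of (lat_south, lat_north, value) tuples; a value of
    None marks a belt with no observations, which is left out.
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for lat_south, lat_north, value in belts:
        if value is None:
            continue
        weight = belt_weight(lat_south, lat_north)
        weighted_sum += weight * value
        weight_total += weight
    return weighted_sum / weight_total


# Hypothetical belt anomalies (deg C) for a single year.
example = [(-30, 0, 0.1), (0, 30, 0.2), (30, 60, -0.1), (60, 90, None)]
near_global = area_average(example)
```

The weighting matters because belts of equal latitude width shrink towards the poles: the belt from 0° to 30° covers as much area as the entire region from 30° to 90°.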

Figure 1.3. Published records of surface temperature change over large regions. Köppen (1881) tropics and temperate latitudes using land air temperature. Callendar (1938 [JoC]) global using land stations. Willett (1950 [NPR]) global using land stations. Callendar (1961 [JoC]) 60°N to 60°S using land stations. Mitchell (1963 [NPR, ARC]) global using land stations. Budyko (1969 [JoC]) Northern Hemisphere using land stations and ship reports. Jones et al. (1986a [PoC, JoC, ARC], b) global using land stations. Hansen and Lebedeff (1987 [JoC, ARC]) global using land stations. Brohan et al. (2006 [JoC, MoS]) global using land air temperature and sea surface temperature data is the longest of the currently updated global temperature time series (Section 3.2). All time series were smoothed using a 13-point filter. The Brohan et al. (2006 [JoC, MoS]) time series are anomalies from the 1961 to 1990 mean (°C). Each of the other time series was originally presented as anomalies from the mean temperature of a specific and differing base period. To make them comparable, the other time series have been adjusted to have the mean of their last 30 years identical to that same period in the Brohan et al. (2006 [JoC, MoS]) anomaly time series.
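The adjustment described at the end of the caption amounts to adding a constant offset to each older series. A minimal sketch, assuming each series is held as a mapping from year to anomaly, is given below. The function name and the data are hypothetical, and only years present in both series are used to compute the offset.

```python
def align_to_reference(series, reference, n_years=30):
    """Shift `series` so that its mean over its last `n_years` equals the
    mean of `reference` over those same years.

    Both arguments map year -> anomaly (deg C).
    """
    last_years = sorted(series)[-n_years:]
    common = [year for year in last_years if year in reference]
    if not common:
        raise ValueError("no overlap with the reference series")
    series_mean = sum(series[y] for y in common) / len(common)
    reference_mean = sum(reference[y] for y in common) / len(common)
    offset = reference_mean - series_mean
    return {year: value + offset for year, value in series.items()}


# Made-up example: an older series on its own base period.
older = {year: 1.0 for year in range(1900, 1950)}
modern = {year: 0.2 for year in range(1850, 2006)}
aligned = align_to_reference(older, modern)
```

Because only a constant is added, the shape of each series, including its trends and year-to-year variations, is unchanged; only its vertical position on the common axis moves.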

Callendar (1938 [JoC]) produced the next global temperature time series expressly to investigate the influence of CO2 on temperature (Section 2.3). Callendar examined about 200 station records. Only a small portion of them were deemed defective, based on quality concerns determined by comparing differences with neighbouring stations or on homogeneity concerns based on station changes documented in the recorded metadata. After further removing two arctic stations because he had no compensating stations from the antarctic region, he created a global average using data from 147 stations.

Most of Callendar’s data came from World Weather Records (WWR; Clayton, 1927 [NPR]). Initiated by a resolution at the 1923 IMO Conference, WWR was a monumental international undertaking producing a 1,196-page volume of monthly temperature, precipitation and pressure data from hundreds of stations around the world, some with data starting in the early 1800s. In the early 1960s, J. Wolbach had these data digitised (National Climatic Data Center, 2002). The WWR project continues today under the auspices of the WMO with the digital publication of decadal updates to the climate records for thousands of stations worldwide (National Climatic Data Center, 2005).

Willett (1950 [NPR]) also used WWR as the main source of data for 129 stations that he used to create a global temperature time series going back to 1845. While the resolution that initiated WWR called for the publication of long and homogeneous records, Willett took this mandate one step further by carefully selecting a subset of stations with as continuous and homogeneous a record as possible from the most recent update of WWR, which included data through 1940. To avoid over-weighting certain areas such as Europe, only one record, the best available, was included from each 10° latitude and longitude square. Station monthly data were averaged into five-year periods and then converted to anomalies with respect to the five-year period 1935 to 1939. Each station’s anomaly was given equal weight to create the global time series.
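The averaging steps described for Willett's series (five-year means, anomalies relative to 1935 to 1939, then an equal-weight average across stations) can be sketched as follows. This is a simplified illustration rather than Willett's procedure in detail: it starts from annual rather than monthly values, drops incomplete five-year periods, and assumes the choice of one station per 10° square has already been made. All names and data are hypothetical.

```python
from collections import defaultdict


def five_year_means(yearly):
    """Average a year -> temperature mapping into complete five-year
    periods keyed by their first year (1930, 1935, ...)."""
    bins = defaultdict(list)
    for year, temperature in yearly.items():
        bins[year - year % 5].append(temperature)
    return {start: sum(vals) / len(vals)
            for start, vals in bins.items() if len(vals) == 5}


def station_anomalies(yearly, base_start=1935):
    """Five-year means expressed relative to the base period mean."""
    means = five_year_means(yearly)
    base = means[base_start]
    return {period: mean - base for period, mean in means.items()}


def global_series(stations):
    """Equal-weight average of station anomalies for each period."""
    pooled = defaultdict(list)
    for yearly in stations:
        for period, anomaly in station_anomalies(yearly).items():
            pooled[period].append(anomaly)
    return {period: sum(vals) / len(vals)
            for period, vals in sorted(pooled.items())}


# Two made-up stations with annual mean temperatures (deg C).
station_a = {year: 10.0 for year in range(1930, 1940)}
station_b = {year: (5.0 if year < 1935 else 6.0) for year in range(1930, 1940)}
series = global_series([station_a, station_b])
```

Working with anomalies rather than absolute temperatures is what allows a warm station and a cold station to be averaged with equal weight: each contributes only its departure from its own 1935 to 1939 mean.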

Callendar in turn created a new near-global temperature time series in 1961 and cited Willett (1950 [NPR]) as a guide for some of his improvements. Callendar (1961 [JoC]) evaluated 600 stations with about three-quarters of them passing his quality checks. Unbeknownst to Callendar, a former student of Willett, Mitchell (1963 [NPR, ARC]), in work first presented in 1961, had created his own updated global temperature time series using slightly fewer than 200 stations and averaging the data into latitude bands. Landsberg and Mitchell (1961 [JoC, ARC]) compared Callendar’s results with Mitchell’s and stated that there was generally good agreement except in the data-sparse regions of the Southern Hemisphere.

Meanwhile, research in Russia was proceeding on a very different method to produce large-scale time series. Budyko (1969 [JoC]) used smoothed, hand-drawn maps of monthly temperature anomalies as a starting point. While restricted to analysis of the NH, this map-based approach not only allowed the inclusion of an increasing number of stations over time (e.g., 246 in 1881, 753 in 1913, 976 in 1940 and about 2,000 in 1960) but also the utilisation of data over the oceans (Robock, 1982 [JoC]).

Increasing the number of stations utilised has been a continuing theme over the last several decades with considerable effort being spent digitising historical station data as well as addressing the continuing problem of acquiring up-to-date data, as there can be a long lag between making an observation and the data getting into global data sets. During the 1970s and 1980s, several teams produced global temperature time series. Advances especially worth noting during this period include the extended spatial interpolation and station averaging technique of Hansen and Lebedeff (1987 [JoC, ARC]) and the Jones et al. (1986a [PoC, JoC, ARC], b) painstaking assessment of homogeneity and adjustments to account for discontinuities in the record of each of the thousands of stations in a global data set. Since then, global and national data sets have been rigorously adjusted for homogeneity using a variety of statistical and metadata-based approaches (Peterson et al., 1998 [JoC, SRC]).

One recurring homogeneity concern is potential urban heat island contamination in global temperature time series. This concern has been addressed in two ways. The first is by adjusting the temperature of urban stations to account for assessed urban heat island effects (e.g., Karl et al., 1988 [PoC, JoC, ARC]; Hansen et al., 2001 [JoC, ARC]). The second is by performing analyses that, like Callendar (1938 [JoC]), indicate that the bias induced by urban heat islands in the global temperature time series is either minor or non-existent (Jones et al., 1990 [JoC, ARC]; Peterson et al., 1999 [JoC, SRC]).

As the importance of ocean data became increasingly recognised, a major effort was initiated to seek out, digitise and quality-control historical archives of ocean data. This work has since grown into the International Comprehensive Ocean-Atmosphere Data Set (ICOADS; Worley et al., 2005 [JoC]), which has coordinated the acquisition, digitisation and synthesis of data ranging from transmissions by Japanese merchant ships to the logbooks of South African whaling boats. The amount of sea surface temperature (SST) and related data acquired continues to grow.

As fundamental as the basic data work of ICOADS was, there have been two other major advances in SST data. The first was adjusting the early observations to make them comparable to current observations (Section 3.2). Prior to 1940, the majority of SST observations were made from ships by hauling a bucket on deck filled with surface water and placing a thermometer in it. This ancient method eventually gave way to thermometers placed in engine cooling water inlets, which are typically located several metres below the ocean surface. Folland and Parker (1995 [PoC, JoC, ARC]) developed an adjustment model that accounted for heat loss from the buckets and that varied with bucket size and type, exposure to solar radiation, ambient wind speed and ship speed. They verified their results using time series of night marine air temperature. This adjusted the early bucket observations upwards by a few tenths of a degree Celsius.

Most of the ship observations are taken in narrow shipping lanes, so the second advance has been increasing global coverage in a variety of ways. Direct improvement of coverage has been achieved by the internationally coordinated placement of drifting and moored buoys. The buoys began to be numerous enough to make significant contributions to SST analyses in the mid-1980s (McPhaden et al., 1998 [JoC, ARC]) and have subsequently increased to more than 1,000 buoys transmitting data at any one time. Since 1982, satellite data, anchored to in situ observations, have contributed to near-global coverage (Reynolds and Smith, 1994 [JoC]). In addition, several different approaches have been used to interpolate and combine land and ocean observations into the current global temperature time series (Section 3.2). To place the current instrumental observations into a longer historical context requires the use of proxy data (Section 6.2).

Figure 1.3 depicts several historical ‘global’ temperature time series, together with the longest of the current global temperature time series, that of Brohan et al. (2006 [JoC, MoS]; Section 3.2). While the data and the analysis techniques have changed over time, all the time series show a high degree of consistency since 1900. The differences caused by using alternate data sources and interpolation techniques increase when the data are sparser. This phenomenon is especially illustrated by the pre-1880 values of Willett’s (1950) time series. Willett noted that his data coverage remained fairly constant after 1885 but dropped off dramatically before that time to only 11 stations before 1850. The high degree of agreement between the time series resulting from these many different analyses increases the confidence that the changes they are indicating are real.

Although many recent observations are automatic, the vast majority of data that go into global surface temperature calculations – over 400 million individual readings of thermometers at land stations and over 140 million individual in situ SST observations – have depended on the dedication of tens of thousands of individuals for well over a century. Climate science owes a great debt to the work of these individual weather observers as well as to international organisations such as the IMO, WMO and the Global Climate Observing System, which encourage the taking and sharing of high-quality meteorological observations. While modern researchers and their institutions put a great deal of time and effort into acquiring and adjusting the data to account for all known problems and biases, century-scale global temperature time series would not have been possible without the conscientious work of individuals and organisations worldwide dedicated to quantifying and documenting their local environment (Section 3.2).

1.3.3 Detection and Attribution

Using knowledge of past climates to qualify the nature of ongoing changes has become a concern of growing importance during the last decades, as reflected in the successive IPCC reports. While linked together at a technical level, detection and attribution have separate objectives. Detection of climate change is the process of demonstrating that climate has changed in some defined statistical sense, without providing a reason for that change. Attribution of causes of climate change is the process of establishing the most likely causes for the detected change with some defined level of confidence. Using traditional approaches, unequivocal attribution would require controlled experimentation with our climate system. However, with no spare Earth with which to experiment, attribution of anthropogenic climate change must be pursued by: (a) detecting that the climate has changed (as defined above); (b) demonstrating that the detected change is consistent with computer model simulations of the climate change ‘signal’ that is calculated to occur in response to anthropogenic forcing; and (c) demonstrating that the detected change is not consistent with alternative, physically plausible explanations of recent climate change that exclude important anthropogenic forcings.

Both detection and attribution rely on observational data and model output. In spite of the efforts described in Section 1.3.2, estimates of century-scale natural climate fluctuations remain difficult to obtain directly from observations due to the relatively short length of most observational records and a lack of understanding of the full range and effects of the various and ongoing external influences. Model simulations with no changes in external forcing (e.g., no increases in atmospheric CO2 concentration) provide valuable information on the natural internal variability of the climate system on time scales of years to centuries. Attribution, on the other hand, requires output from model runs that incorporate historical estimates of changes in key anthropogenic and natural forcings, such as well-mixed greenhouse gases, volcanic aerosols and solar irradiance. These simulations can be performed with changes in a single forcing only (which helps to isolate the climate effect of that forcing), or with simultaneous changes in a whole suite of forcings.
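The use of unforced control simulations in detection can be illustrated with a minimal sketch. It is not the method of any study cited here: it assumes white-noise internal variability (real climate noise is autocorrelated), uses entirely synthetic data, and the function names and the two-standard-deviation threshold are hypothetical choices made for illustration. The idea is to estimate the spread of trends that arise by chance in segments of a control run and then ask whether an observed trend lies outside that spread.

```python
import random
import statistics


def linear_trend(series):
    """Least-squares slope of a series against its index (units per step)."""
    n = len(series)
    x_mean = (n - 1) / 2
    y_mean = sum(series) / n
    num = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(series))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den


def control_trends(control, window):
    """Trends of all non-overlapping windows of an unforced control run."""
    return [
        linear_trend(control[start:start + window])
        for start in range(0, len(control) - window + 1, window)
    ]


def is_detected(observed, control, z_threshold=2.0):
    """Return (flag, z): flag is True when the observed trend lies more than
    z_threshold standard deviations from the mean control-run trend."""
    trends = control_trends(control, len(observed))
    mu = statistics.mean(trends)
    sigma = statistics.stdev(trends)
    z = (linear_trend(observed) - mu) / sigma
    return abs(z) > z_threshold, z


# Synthetic illustration only (assumed values, not real data).
rng = random.Random(0)
# 5000 "years" of unforced variability, standard deviation 0.1 degrees.
control = [rng.gauss(0.0, 0.1) for _ in range(5000)]
# 100 "years" with an imposed trend of 0.007 degrees per year plus noise.
observed = [0.007 * t + rng.gauss(0.0, 0.1) for t in range(100)]

detected, z_score = is_detected(observed, control)
print(detected, round(z_score, 1))
```

Note that this sketch addresses detection only. Attribution would additionally require comparing the observed change with simulations driven by specific forcings, as described above.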

In the early years of detection and attribution research, the focus was on a single time series – the estimated global-mean changes in the Earth’s surface temperature. While it was not possible to detect anthropogenic warming in 1980, Madden and Ramanathan (1980 [JoC, ARC]) and Hansen et al. (1981 [JoC, ARC]) predicted it would be evident at least within the next two decades. A decade later, Wigley and Raper (1990 [PoC, JoC, ARC]) used a simple energy-balance climate model to show that the observed change in global-mean surface temperature from 1867 to 1982 could not be explained by natural internal variability. This finding was later confirmed using variability estimates from more complex coupled ocean-atmosphere general circulation models (e.g., Stouffer et al., 1994 [JoC, MoS, SRC]).

As the science of climate change progressed, detection and attribution research ventured into more sophisticated statistical analyses that examined complex patterns of climate change. Climate change patterns or ‘fingerprints’ were no longer limited to a single variable (temperature) or to the Earth’s surface. More recent detection and attribution work has made use of precipitation and global pressure patterns, and analysis of vertical profiles of temperature change in the ocean and atmosphere. Studies with multiple variables make it easier to address attribution issues. While two different climate forcings may yield similar changes in global mean temperature, it is highly unlikely that they will produce exactly the same ‘fingerprint’ (i.e., climate changes that are identical as a function of latitude, longitude, height, season and history over the 20th century).

Such model-predicted fingerprints of anthropogenic climate change are clearly statistically identifiable in observed data. The common conclusion of a wide range of fingerprint studies conducted over the past 15 years is that observed climate changes cannot be explained by natural factors alone (Santer et al., 1995 [PoC, JoC, SRC], 1996a,b,c; Hegerl et al., 1996 [JoC, SRC], 1997, 2000; Hasselmann, 1997 [JoC]; Barnett et al., 1999 [JoC]; Tett et al., 1999 [JoC, ARC]; Stott et al., 2000 [JoC, MoS, ARC]). A substantial anthropogenic influence is required in order to best explain the observed changes. The evidence from this body of work strengthens the scientific case for a discernible human influence on global climate.

1.4 Examples of Progress in Understanding Climate Processes

1.4.1 The Earth’s Greenhouse Effect

The realisation that Earth’s climate might be sensitive to the atmospheric concentrations of gases that create a greenhouse effect is more than a century old. Fleming (1998 [NPR, SRC]) and Weart (2003 [NPR]) provided an overview of the emerging science. In terms of the energy balance of the climate system, Edme Mariotte noted in 1681 that although the Sun’s light and heat easily pass through glass and other transparent materials, heat from other sources (chaleur de feu) does not. The ability to generate an artificial warming of the Earth’s surface was demonstrated in simple greenhouse experiments such as Horace Benedict de Saussure’s experiments in the 1760s using a ‘heliothermometer’ (panes of glass covering a thermometer in a darkened box) to provide an early analogy to the greenhouse effect. It was a conceptual leap to recognise that the air itself could also trap thermal radiation. In 1824, Joseph Fourier, citing Saussure, argued ‘the temperature [of the Earth] can be augmented by the interposition of the atmosphere, because heat in the state of light finds less resistance in penetrating the air, than in repassing into the air when converted into non-luminous heat’. In 1836, Pouillet followed up on Fourier’s ideas and argued ‘the atmospheric stratum…exercises a greater absorption upon the terrestrial than on the solar rays’. There was still no understanding of exactly what substance in the atmosphere was responsible for this absorption.

In 1859, John Tyndall (1861) identified through laboratory experiments the absorption of thermal radiation by complex molecules (as opposed to the primary bimolecular atmospheric constituents O2 and molecular nitrogen). He noted that changes in the amount of any of the radiatively active constituents of the atmosphere such as water (H2O) or CO2 could have produced ‘all the mutations of climate which the researches of geologists reveal’. In 1895, Svante Arrhenius (1896) followed with a climate prediction based on greenhouse gases, suggesting that a 40% increase or decrease in the atmospheric abundance of the trace gas CO2 might trigger the glacial advances and retreats. One hundred years later, it would be found that CO2 did indeed vary by this amount between glacial and interglacial periods. However, it now appears that the initial climatic change preceded the change in CO2 but was enhanced by it (Section 6.4).

G. S. Callendar (1938 [JoC]) solved a set of equations linking greenhouse gases and climate change. He found that a doubling of atmospheric CO2 concentration resulted in an increase in the mean global temperature of 2°C, with considerably more warming at the poles, and linked increasing fossil fuel combustion with a rise in CO2 and its greenhouse effects: ‘As man is now changing the composition of the atmosphere at a rate which must be very exceptional on the geological time scale, it is natural to seek for the probable effects of such a change. From the best laboratory observations it appears that the principal result of increasing atmospheric carbon dioxide…would be a gradual increase in the mean temperature of the colder regions of the Earth.’ In 1947, Ahlmann reported a 1.3°C warming in the North Atlantic sector of the Arctic since the 19th century and mistakenly believed this climate variation could be explained entirely by greenhouse gas warming. Similar model predictions were echoed by Plass in 1956 (see Fleming, 1998 [NPR, SRC]): ‘If at the end of this century, measurements show that the carbon dioxide content of the atmosphere has risen appreciably and at the same time the temperature has continued to rise throughout the world, it will be firmly established that carbon dioxide is an important factor in causing climatic change’ (see Chapter 9).

The interdisciplinary field of carbon cycle science began with attempts to understand the carbon cycle, and specifically how fossil fuel emissions would change atmospheric CO2. One of the first problems addressed was the atmosphere-ocean exchange of CO2. Revelle and Suess (1957 [JoC]) explained why part of the emitted CO2 was observed to accumulate in the atmosphere rather than being completely absorbed by the oceans. While CO2 can be mixed rapidly into the upper layers of the ocean, the time to mix with the deep ocean is many centuries. By the time of the TAR, the interaction of climate change with the oceanic circulation and biogeochemistry was projected to reduce the fraction of anthropogenic CO2 emissions taken up by the oceans in the future, leaving a greater fraction in the atmosphere (Sections 7.1, 7.3 and 10.4).

In the 1950s, the greenhouse gases of concern remained CO2 and H2O, the same two identified by Tyndall a century earlier. It was not until the 1970s that other greenhouse gases – CH4, N2O and CFCs – were widely recognised as important anthropogenic greenhouse gases (Ramanathan, 1975 [JoC, ARC]; Wang et al., 1976 [JoC]; Section 2.3). By the 1970s, the importance of aerosol-cloud effects in reflecting sunlight was known (Twomey, 1977 [JoC]), and atmospheric aerosols (suspended small particles) were being proposed as climate-forcing constituents. Charlson and others (summarised in Charlson et al., 1990 [JoC]) built a consensus that sulphate aerosols were, by themselves, cooling the Earth’s surface by directly reflecting sunlight. Moreover, the increases in sulphate aerosols were anthropogenic and linked with the main source of CO2, burning of fossil fuels (Section 2.4). Thus, the current picture of the atmospheric constituents driving climate change contains a much more diverse mix of greenhouse agents.

1.4.2 Past Climate Observations, Astronomical Theory and Abrupt Climate Changes

Throughout the 19th and 20th centuries, a wide range of geomorphology and palaeontology studies has provided new insight into the Earth’s past climates, covering periods of hundreds of millions of years. The Palaeozoic Era, beginning 600 Ma, displayed evidence of both warmer and colder climatic conditions than the present; the Tertiary Period (65 to 2.6 Ma) was generally warmer; and the Quaternary Period (2.6 Ma to the present – the ice ages) showed oscillations between glacial and interglacial conditions. Louis Agassiz (1837 [NPR]) developed the hypothesis that Europe had experienced past glacial ages, and there has since been a growing awareness that long-term climate observations can advance the understanding of the physical mechanisms affecting climate change. The scientific study of one such mechanism – modifications in the geographical and temporal patterns of solar energy reaching the Earth’s surface due to changes in the Earth’s orbital parameters – has a long history. The pioneering contributions of Milankovitch (1941 [NPR]) to this astronomical theory of climate change are widely known, and the historical review of Imbrie and Imbrie (1979 [NPR]) calls attention to much earlier contributions, such as those of James Croll, originating in 1864.

The pace of palaeoclimatic research has accelerated over recent decades. Quantitative and well-dated records of climate fluctuations over the last 100 kyr have brought a more comprehensive view of how climate changes occur, as well as the means to test elements of the astronomical theory. By the 1950s, studies of deep-sea cores suggested that the ocean temperatures may have been different during glacial times (Emiliani, 1955). Ewing and Donn (1956 [JoC]) proposed that changes in ocean circulation actually could initiate an ice age. In the 1960s, the works of Emiliani (1969 [JoC]) and Shackleton (1967 [JoC]) showed the potential of isotopic measurements in deep-sea sediments to help explain Quaternary changes. In the 1970s, it became possible to analyse a deep-sea core time series of more than 700 kyr, thereby using the last reversal of the Earth’s magnetic field to establish a dated chronology. This deep-sea observational record clearly showed the same periodicities found in the astronomical forcing, immediately providing strong support to Milankovitch’s theory (Hays et al., 1976 [JoC]).

Ice cores provide key information about past climates, including surface temperatures and atmospheric chemical composition. The bubbles sealed in the ice are the only available samples of these past atmospheres. The first deep ice cores from Vostok in Antarctica (Barnola et al., 1987 [JoC, ARC]; Jouzel et al., 1987 [JoC, ARC], 1993) provided additional evidence of the role of astronomical forcing. They also revealed a highly correlated evolution of temperature changes and atmospheric composition, which was subsequently confirmed over the past 400 kyr (Petit et al., 1999 [JoC]) and now extends to almost 1 Myr. This discovery drove research to understand the causal links between greenhouse gases and climate change. The same data that confirmed the astronomical theory also revealed its limits: a linear response of the climate system to astronomical forcing could not explain entirely the observed fluctuations of rapid ice-age terminations preceded by longer cycles of glaciations.

The importance of other sources of climate variability was heightened by the discovery of abrupt climate changes. In this context, ‘abrupt’ designates regional events of large amplitude, typically a few degrees Celsius, which occurred within several decades – much shorter than the thousand-year time scales that characterise changes in astronomical forcing. Abrupt temperature changes were first revealed by the analysis of deep ice cores from Greenland (Dansgaard et al., 1984 [NPR]). Oeschger et al. (1984 [NPR]) recognised that the abrupt changes during the termination of the last ice age correlated with cooling in Gerzensee (Switzerland) and suggested that regime shifts in the Atlantic Ocean circulation were causing these widespread changes. The synthesis of palaeoclimatic observations by Broecker and Denton (1989) invigorated the community over the next decade. By the end of the 1990s, it became clear that the abrupt climate changes during the last ice age, particularly in the North Atlantic regions as found in the Greenland ice cores, were numerous (Dansgaard et al., 1993 [JoC]), indeed abrupt (Alley et al., 1993 [JoC, ARC]) and of large amplitude (Severinghaus and Brook, 1999 [JoC]). They are now referred to as Dansgaard-Oeschger events. A similar variability is seen in the North Atlantic Ocean, with north-south oscillations of the polar front (Bond et al., 1992 [JoC]) and associated changes in ocean temperature and salinity (Cortijo et al., 1999 [JoC, ARC]). With no obvious external forcing, these changes are thought to be manifestations of the internal variability of the climate system.

The importance of internal variability and processes was reinforced in the early 1990s with analysis of records with high temporal resolution. New ice cores (Greenland Ice Core Project, Johnsen et al., 1992 [JoC]; Greenland Ice Sheet Project 2, Grootes et al., 1993 [JoC]), new ocean cores from regions with high sedimentation rates, as well as lacustrine sediments and cave stalagmites produced additional evidence for unforced climate changes, and revealed a large number of abrupt changes in many regions throughout the last glacial cycle. Long sediment cores from the deep ocean were used to reconstruct the thermohaline circulation connecting deep and surface waters (Bond et al., 1992 [JoC]; Broecker, 1997 [JoC]) and to demonstrate the participation of the ocean in these abrupt climate changes during glacial periods.

By the end of the 1990s, palaeoclimate proxies for a range of climate observations had expanded greatly. The analysis of deep corals provided indicators for nutrient content and mass exchange from the surface to deep water (Adkins et al., 1998 [JoC]), showing abrupt variations characterised by synchronous changes in surface and deep-water properties (Shackleton et al., 2000 [JoC]). Precise measurements of the CH4 abundances (a global quantity) in polar ice cores showed that they changed in concert with the Dansgaard-Oeschger events and thus allowed for synchronisation of the dating across ice cores (Blunier et al., 1998 [JoC]). The characteristics of the Antarctic temperature variations and their relation to the Dansgaard-Oeschger events in Greenland were consistent with the simple concept of a bipolar seesaw caused by changes in the thermohaline circulation of the Atlantic Ocean (Stocker, 1998 [JoC, SRC]). This work underlined the role of the ocean in transmitting the signals of abrupt climate change.

Abrupt changes are often regional; for example, severe droughts lasting for many years have changed civilizations, and have occurred during the last 10 kyr of stable warm climate (deMenocal, 2001). This result has altered the notion of a stable climate during warm epochs, as previously suggested by the polar ice cores. The emerging picture of an unstable ocean-atmosphere system has opened the debate of whether human interference through greenhouse gases and aerosols could trigger such events (Broecker, 1997 [JoC]).

Palaeoclimate reconstructions cited in the FAR were based on various data, including pollen records, insect and animal remains, oxygen isotopes and other geological data from lake varves, loess, ocean sediments, ice cores and glacier termini. These records provided estimates of climate variability on time scales up to millions of years. A climate proxy is a local quantitative record (e.g., thickness and chemical properties of tree rings, pollen of different species) that is interpreted as a climate variable (e.g., temperature or rainfall) using a transfer function that is based on physical principles and recently observed correlations between the two records. The combination of instrumental and proxy data began in the 1960s with the investigation of the influence of climate on the proxy data, including tree rings (Fritts, 1962 [JoC]), corals (Weber and Woodhead, 1972 [JoC]; Dunbar and Wellington, 1981 [JoC]) and ice cores (Dansgaard et al., 1984 [NPR]; Jouzel et al., 1987 [JoC, ARC]). Phenological and historical data (e.g., blossoming dates, harvest dates, grain prices, ships’ logs, newspapers, weather diaries, ancient manuscripts) are also a valuable source of climatic reconstruction for the period before instrumental records became available. Such documentary data also need calibration against instrumental data to extend and reconstruct the instrumental record (Lamb, 1969 [JoC]; Zhu, 1973; van den Dool, 1978 [JoC]; Brazdil, 1992 [NPR, MoS]; Pfister, 1992 [NPR, MoS, SRC]). With the development of multi-proxy reconstructions, the climate data were extended not only from local to global, but also from instrumental data to patterns of climate variability (Wanner et al., 1995; Mann et al., 1998 [PoC, JoC, MoS]; Luterbacher et al., 1999 [JoC, SRC]). Most of these reconstructions were at single sites and only loose efforts had been made to consolidate records. Mann et al. (1998 [PoC, JoC, MoS]) made a notable advance in the use of proxy data by ensuring that the dating of different records lined up. Thus, the true spatial patterns of temperature variability and change could be derived, and estimates of NH average surface temperatures were obtained.
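The notion of a transfer function calibrated against instrumental data can be sketched in its simplest form, a straight-line fit over the period where proxy and instrumental records overlap. This is an illustrative assumption rather than the procedure of any reconstruction cited above: actual transfer functions may be nonlinear or multivariate, and the function names and all numbers below are invented for the example.

```python
def fit_transfer_function(proxy, temperature):
    """Ordinary least-squares fit of temperature = a + b * proxy,
    computed over the period where both records overlap."""
    n = len(proxy)
    p_mean = sum(proxy) / n
    t_mean = sum(temperature) / n
    covariance = sum((p - p_mean) * (t - t_mean)
                     for p, t in zip(proxy, temperature))
    variance = sum((p - p_mean) ** 2 for p in proxy)
    b = covariance / variance
    a = t_mean - b * p_mean
    return a, b


def reconstruct(proxy, a, b):
    """Apply the calibrated transfer function to proxy values that
    pre-date the instrumental record."""
    return [a + b * p for p in proxy]


# Invented calibration data: tree-ring widths (mm) and summer temperatures
# (degrees C) for the same years. Real calibration data are noisy.
ring_width_mm = [1.0, 1.2, 1.4, 1.6, 1.8]
summer_temp_c = [14.0, 14.5, 15.0, 15.5, 16.0]

a, b = fit_transfer_function(ring_width_mm, summer_temp_c)

# Invented pre-instrumental ring widths to be converted to temperature.
early_temps = reconstruct([0.9, 1.5], a, b)
print(a, b, early_temps)
```

A reconstruction of this kind inherits the assumption that the proxy-climate relationship seen in the calibration period also held in the past, which is one reason the text stresses physical principles alongside observed correlations.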

The Working Group I (WGI) FAR noted that past climates could provide analogues. Fifteen years of research since that assessment has identified a range of variations and instabilities in the climate system that occurred during the last 2 Myr of glacial-interglacial cycles and in the super-warm period of 50 Ma. These past climates do not appear to be analogues of the immediate future, yet they do reveal a wide range of climate processes that need to be understood when projecting 21st-century climate change (see Chapter 6).

1.4.3 Solar Variability and the Total Solar Irradiance

Measurement of the absolute value of total solar irradiance (TSI) is difficult from the Earth’s surface because of the need to correct for the influence of the atmosphere. Langley (1884 [NPR]) attempted to minimise the atmospheric effects by taking measurements from high on Mt. Whitney in California, and to estimate the correction for atmospheric effects by taking measurements at several times of day, for example, with the solar radiation having passed through different atmospheric pathlengths. Between 1902 and 1957, Charles Abbot and a number of other scientists around the globe made thousands of measurements of TSI from mountain sites. Values ranged from 1,322 to 1,465 W m–2, which encompasses the current estimate of 1,365 W m–2. Foukal et al. (1977 [ARC]) deduced from Abbot’s daily observations that higher values of TSI were associated with more solar faculae (e.g., Abbot, 1910 [NPR]).

In 1978, the Nimbus-7 satellite was launched with a cavity radiometer and provided evidence of variations in TSI (Hickey et al., 1980 [JoC]). Additional observations were made with an active cavity radiometer on the Solar Maximum Mission, launched in 1980 (Willson et al., 1980 [JoC]). Both of these missions showed that the passage of sunspots and faculae across the Sun’s disk influenced TSI. At the maximum of the 11-year solar activity cycle, the TSI is larger by about 0.1% than at the minimum. The observation that TSI is highest when sunspots are at their maximum is the opposite of Langley’s (1876) hypothesis.

As early as 1910, Abbot believed that he had detected a downward trend in TSI that coincided with a general cooling of climate. The solar cycle variation in irradiance corresponds to an 11-year cycle in radiative forcing which varies by about 0.2 W m–2. There is increasingly reliable evidence of its influence on atmospheric temperatures and circulations, particularly in the higher atmosphere (Reid, 1991 [JoC, ARC]; Brasseur, 1993 [JoC, MoS, ARC]; Balachandran and Rind, 1995 [JoC, MoS, ARC]; Haigh, 1996 [JoC, SRC]; Labitzke and van Loon, 1997; van Loon and Labitzke, 2000). Calculations with three-dimensional models (Wetherald and Manabe, 1975 [JoC, MoS]; Cubasch et al., 1997 [JoC, MoS, SRC]; Lean and Rind, 1998 [JoC, MoS, ARC]; Tett et al., 1999 [JoC, ARC]; Cubasch and Voss, 2000 [SRC]) suggest that the changes in solar radiation could cause surface temperature changes of the order of a few tenths of a degree Celsius.
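The link between the roughly 0.1% solar-cycle change in TSI and a radiative forcing of about 0.2 W m–2 can be checked with a short worked calculation. It relies on two assumptions not stated in this section: that the incoming flux is averaged over the sphere (a factor of 1/4), and that the planetary albedo is about 0.3. The symbols S (TSI), α (albedo) and ΔF (forcing) are introduced here for illustration only.

```latex
\Delta S \approx 0.001 \times 1365\ \mathrm{W\,m^{-2}} \approx 1.4\ \mathrm{W\,m^{-2}}

\Delta F = \frac{(1-\alpha)\,\Delta S}{4}
         \approx \frac{(1-0.3)\times 1.4\ \mathrm{W\,m^{-2}}}{4}
         \approx 0.24\ \mathrm{W\,m^{-2}}
```

Under these assumptions the result is consistent with the figure of about 0.2 W m–2 quoted above.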

For the time before satellite measurements became available, the solar radiation variations can be inferred from cosmogenic isotopes (10Be, 14C) and from the sunspot number. Naked-eye observations of sunspots date back to ancient times, but it was only after the invention of the telescope in 1607 that it became possible to routinely monitor the number, size and position of these ‘stains’ on the surface of the Sun. Throughout the 17th and 18th centuries, numerous observers noted the variable concentrations and ephemeral nature of sunspots, but very few sightings were reported between 1672 and 1699 (for an overview see Hoyt et al., 1994 [JoC]). This period of low solar activity, now known as the Maunder Minimum, occurred during the climate period now commonly referred to as the Little Ice Age (Eddy, 1976 [JoC]). There is no exact agreement as to which dates mark the beginning and end of the Little Ice Age, but from about 1350 to about 1850 is one reasonable estimate.

During the latter part of the 18th century, Wilhelm Herschel (1801) noted the presence not only of sunspots but of bright patches, now referred to as faculae, and of granulations on the solar surface. He believed that when these indicators of activity were more numerous, solar emissions of light and heat were greater and could affect the weather on Earth. Heinrich Schwabe (1844) published his discovery of a ‘10-year cycle’ in sunspot numbers. Samuel Langley (1876) compared the brightness of sunspots with that of the surrounding photosphere. He concluded that they would block the emission of radiation and estimated that at sunspot cycle maximum the Sun would be about 0.1% less bright than at the minimum of the cycle, and that the Earth would be 0.1°C to 0.3°C cooler.

These satellite data have been used in combination with the historically recorded sunspot number, records of cosmogenic isotopes, and the characteristics of other Sun-like stars to estimate the solar radiation over the last 1,000 years (Eddy, 1976 [JoC]; Hoyt and Schatten, 1993 [JoC], 1997; Lean et al., 1995 [PoC, JoC, ARC]; Lean, 1997 [ARC]). These data sets indicated quasi-periodic changes in solar radiation of 0.24 to 0.30% on the centennial time scale. These values have recently been re-assessed (see, e.g., Chapter 2).

The TAR states that the changes in solar irradiance are not the major cause of the temperature changes in the second half of the 20th century unless those changes can induce unknown large feedbacks in the climate system. The effects of galactic cosmic rays on the atmosphere (via cloud nucleation) and those due to shifts in the solar spectrum towards the ultraviolet (UV) range, at times of high solar activity, are largely unknown. The latter may produce changes in tropospheric circulation via changes in static stability resulting from the interaction of the increased UV radiation with stratospheric ozone. More research to investigate the effects of solar behaviour on climate is needed before the magnitude of solar effects on climate can be stated with certainty.

1.4.4 Biogeochemistry and Radiative Forcing

The modern scientific understanding of the complex and interconnected roles of greenhouse gases and aerosols in climate change has undergone rapid evolution over the last two decades. While the concepts were recognised and outlined in the 1970s (see Sections 1.3.1 and 1.4.1), the publication of generally accepted quantitative results coincides with, and was driven in part by, the questions asked by the IPCC beginning in 1988. Thus, it is instructive to view the evolution of this topic as it has been treated in the successive IPCC reports.

The WGI FAR codified the key physical and biogeochemical processes in the Earth system that relate a changing climate to atmospheric composition, chemistry, the carbon cycle and natural ecosystems. The science of the time, as summarised in the FAR, made a clear case for anthropogenic interference with the climate system. In terms of greenhouse agents, the main conclusions from the WGI FAR Policymakers Summary are still valid today: (1) ‘emissions resulting from human activities are substantially increasing the atmospheric concentrations of the greenhouse gases: CO2, CH4, CFCs, N2O’; (2) ‘some gases are potentially more effective (at greenhouse warming)’; (3) feedbacks between the carbon cycle, ecosystems and atmospheric greenhouse gases in a warmer world will affect CO2 abundances; and (4) GWPs provide a metric for comparing the climatic impact of different greenhouse gases, one that integrates both the radiative influence and biogeochemical cycles. The climatic importance of tropospheric ozone, sulphate aerosols and atmospheric chemical feedbacks was proposed by scientists at the time and noted in the assessment. For example, early global chemical modelling results argued that global tropospheric ozone, a greenhouse gas, was controlled by emissions of the highly reactive gases nitrogen oxides (NOx), carbon monoxide (CO) and non-methane hydrocarbons (NMHC, also known as volatile organic compounds, VOC). In terms of sulphate aerosols, both the direct radiative effects and the indirect effects on clouds were acknowledged, but the importance of carbonaceous aerosols from fossil fuel and biomass combustion was not recognised (Chapters 2, 7 and 10).

The concept of radiative forcing (RF) as the radiative imbalance (W m–2) in the climate system at the top of the atmosphere caused by the addition of a greenhouse gas (or other change) was established at the time and summarised in Chapter 2 of the WGI FAR. Agents of RF included the direct greenhouse gases, solar radiation, aerosols and the Earth’s surface albedo. What was new and only briefly mentioned was that ‘many gases produce indirect effects on the global radiative forcing’. The innovative global modelling work of Derwent (1990 [NPR]) showed that emissions of the reactive but non-greenhouse gases – NOx, CO and NMHCs – altered atmospheric chemistry and thus changed the abundance of other greenhouse gases. Indirect GWPs for NOx, CO and VOCs were proposed. The projected chemical feedbacks were limited to short-lived increases in tropospheric ozone. By 1990, it was clear that the RF from tropospheric ozone had increased over the 20th century and stratospheric ozone had decreased since 1980 (e.g., Lacis et al., 1990 [JoC, MoS]), but the associated RFs were not evaluated in the assessments. Neither was the effect of anthropogenic sulphate aerosols, except to note in the FAR that ‘it is conceivable that this radiative forcing has been of a comparable magnitude, but of opposite sign, to the greenhouse forcing earlier in the century’. Reflecting in general the community’s concerns about this relatively new measure of climate forcing, RF bar charts appear only in the underlying FAR chapters, but not in the FAR Summary. Only the long-lived greenhouse gases are shown, although the future direct effect of sulphate aerosols is noted with a question mark (i.e., dependent on future emissions) (Chapters 2, 7 and 10).

The cases for more complex chemical and aerosol effects were becoming clear, but the scientific community was unable at the time to reach general agreement on the existence, scale and magnitude of these indirect effects. Nevertheless, these early discoveries drove the research agendas in the early 1990s. The widespread development and application of global chemistry-transport models had just begun with international workshops ( Pyle et al., 1996 [NPR, MoS] ; Jacob et al., 1997 [JoC, MoS, ARC] ; Rasch, 2000 [JoC, MoS] ). In the Supplementary Report ( IPCC, 1992 [NPR] ) to the FAR, the indirect chemical effects of CO, NOx and VOC were reaffirmed, and the feedback effect of CH4 on the tropospheric hydroxyl radical (OH) was noted, but the indirect RF values from the FAR were retracted and denoted in a table with ‘+’, ‘0’ or ‘–’. Aerosol-climate interactions still focused on sulphates, and the assessment of their direct RF for the NH (i.e., a cooling) was now somewhat quantitative as compared to the FAR. Stratospheric ozone depletion was noted as causing a significant and negative RF, but not quantified. Ecosystems research at this time was identifying the responses to climate change and CO2 increases, as well as altered CH4 and N2O fluxes from natural systems; however, in terms of a community assessment it remained qualitative.

By 1994, with work on SAR progressing, the Special Report on Radiative Forcing ( IPCC, 1995 [NPR, MoS] ) reported significant breakthroughs in a set of chapters limited to assessment of the carbon cycle, atmospheric chemistry, aerosols and RF. The carbon budget for the 1980s was analysed not only from bottom-up emissions estimates, but also from a top-down approach including carbon isotopes. A first carbon cycle assessment was performed through an international model and analysis workshop examining terrestrial and oceanic uptake to better quantify the relationship between CO2 emissions and the resulting increase in atmospheric abundance. Similarly, expanded analyses of the global budgets of trace gases and aerosols from both natural and anthropogenic sources highlighted the rapid expansion of biogeochemical research. The first RF bar chart appears, comparing all the major components of RF change from the pre-industrial period to the present. Anthropogenic soot aerosol, with a positive RF, was not in the 1995 Special Report but was added to the SAR. In terms of atmospheric chemistry, the first open-invitation modelling study for the IPCC recruited 21 atmospheric chemistry models to participate in a controlled study of photochemistry and chemical feedbacks. These studies (e.g., Olson et al., 1997 [JoC, MoS] ) demonstrated a robust consensus about some indirect effects, such as the CH4 impact on atmospheric chemistry, but great uncertainty about others, such as the prediction of tropospheric ozone changes. The model studies plus the theory of chemical feedbacks in the CH4-CO-OH system ( Prather, 1994 [JoC, SRC] ) firmly established that the atmospheric lifetime of a perturbation (and hence climate impact and GWP) of CH4 emissions was about 50% greater than reported in the FAR. There was still no consensus on quantifying the past or future changes in tropospheric ozone or OH (the primary sink for CH4) (Chapters 2, 7 and 10).
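The difference between a budget lifetime and a perturbation lifetime can be illustrated with a toy one-box model. The sketch below is not taken from the assessment or from Prather's analysis: it simply assumes that the loss lifetime grows as a power law of the burden (more CH4 suppresses OH, its own sink), with a hypothetical exponent `s` and a hypothetical 10-year budget lifetime. Linearising such a model gives a pulse decay time of `tau0 / (1 - s)`, so an exponent near one-third lengthens the perturbation lifetime by roughly 50%.

```python
import math


def pulse_efold_time(tau0, s, pulse_frac=0.01, dt=0.01):
    """E-folding time of a small pulse in a one-box model (illustrative only).

    tau0       -- steady-state budget lifetime in years (assumed value)
    s          -- assumed sensitivity: lifetime scales as burden ** s
    pulse_frac -- size of the added pulse relative to the steady-state burden
    dt         -- forward-Euler time step in years
    """
    c0 = 1.0                 # normalised steady-state burden
    emissions = c0 / tau0    # constant emissions that balance loss at c0
    c = c0 * (1.0 + pulse_frac)
    target = c0 + c0 * pulse_frac / math.e  # pulse decayed to 1/e
    t = 0.0
    while c > target:
        tau = tau0 * (c / c0) ** s          # more burden -> longer lifetime
        c += dt * (emissions - c / tau)
        t += dt
    return t


if __name__ == "__main__":
    no_feedback = pulse_efold_time(10.0, 0.0)
    with_feedback = pulse_efold_time(10.0, 1.0 / 3.0)
    print(f"pulse decay time without feedback: {no_feedback:.1f} yr")
    print(f"pulse decay time with feedback:    {with_feedback:.1f} yr")
```

With `s = 0` the pulse decays on the budget lifetime itself; any positive `s` makes the added gas persist longer than the budget lifetime alone would suggest.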

In the early 1990s, research on aerosols as climate forcing agents expanded. Based on new research, the range of climate-relevant aerosols was extended for the first time beyond sulphates to include nitrates, organics, soot, mineral dust and sea salt. Quantitative estimates of sulphate aerosol indirect effects on cloud properties and hence RF were sufficiently well established to be included in assessments, and carbonaceous aerosols from biomass burning were recognised as being comparable in importance to sulphate ( Penner et al., 1992 [JoC, SRC] ). Ranges are given in the special report ( IPCC, 1995 [NPR, MoS] ) for direct sulphate RF (–0.25 to –0.9 W m–2) and biomass-burning aerosols (–0.05 to –0.6 W m–2). The aerosol indirect RF was estimated to be about equal to the direct RF, but with larger uncertainty. The injection of stratospheric aerosols from the eruption of Mt. Pinatubo was noted as the first modern test of a known radiative forcing, and indeed one climate model accurately predicted the temperature response ( Hansen et al., 1992 [JoC, ARC] ). In the one-year interval between the special report and the SAR, the scientific understanding of aerosols grew. The direct anthropogenic aerosol forcing (from sulphate, fossil-fuel soot and biomass-burning aerosols) was reduced to –0.5 W m–2. The RF bar chart was now broken into aerosol components (sulphate, fossil-fuel soot and biomass-burning aerosols) with a separate range for indirect effects (Chapters 2 and 7; Sections 8.2 and 9.2).

Throughout the 1990s, there were concerted research programs in the USA and EU to evaluate the global environmental impacts of aviation. Several national assessments culminated in the IPCC Special Report on Aviation and the Global Atmosphere ( IPCC, 1999 [NPR] ), which assessed the impacts on climate and global air quality. An open invitation for atmospheric model participation resulted in community participation and a consensus on many of the environmental impacts of aviation (e.g., the increase in tropospheric ozone and decrease in CH4 due to NOx emissions were quantified). The direct RF of sulphate and of soot aerosols was likewise quantified along with that of contrails, but the impact on cirrus clouds that are sometimes generated downwind of contrails was not. The assessment reaffirmed that RF was a first-order metric for the global mean surface temperature response, but noted that it was inadequate for regional climate change, especially in view of the largely regional forcing from aerosols and tropospheric ozone (Sections 2.6, 2.8 and 10.2).

By the end of the 1990s, research on atmospheric composition and climate forcing had made many important advances. The TAR was able to provide a more quantitative evaluation in some areas. For example, a large, open-invitation modelling workshop was held for both aerosols (11 global models) and tropospheric ozone-OH chemistry (14 global models). This workshop brought together as collaborating authors most of the international scientific community involved in developing and testing global models of atmospheric composition. In terms of atmospheric chemistry, a strong consensus was reached for the first time that science could predict the changes in tropospheric ozone in response to scenarios for CH4 and the indirect greenhouse gases (CO, NOx, VOC) and that a quantitative GWP for CO could be reported. Further, combining these models with observational analysis, an estimate of the change in tropospheric ozone since the pre-industrial era – with uncertainties – was reported. The aerosol workshop made similar advances in evaluating the impact of different aerosol types. There were many different representations of uncertainty (e.g., a range in models versus an expert judgment) in the TAR, and the consensus RF bar chart did not generate a total RF or uncertainties for use in the subsequent IPCC Synthesis Report ( IPCC, 2001b [NPR] ) (Chapters 2 and 7; Section 9.2).

1.4.5 Cryospheric Topics

The cryosphere, which includes the ice sheets of Greenland and Antarctica, continental (including tropical) glaciers, snow, sea ice, river and lake ice, permafrost and seasonally frozen ground, is an important component of the climate system. The cryosphere derives its importance to the climate system from a variety of effects, including its high reflectivity (albedo) for solar radiation, its low thermal conductivity, its large thermal inertia, its potential for affecting ocean circulation (through exchange of freshwater and heat) and atmospheric circulation (through topographic changes), its large potential for affecting sea level (through growth and melt of land ice), and its potential for affecting greenhouse gases (through changes in permafrost) (Chapter 4).

Studies of the cryospheric albedo feedback have a long history. The albedo is the fraction of solar energy reflected back to space. Over snow and ice, the albedo (about 0.7 to 0.9) is large compared to that over the oceans (<0.1). In a warming climate, it is anticipated that the cryosphere would shrink, the Earth’s overall albedo would decrease and more solar energy would be absorbed to warm the Earth still further. This powerful feedback loop was recognised in the 19th century by Croll (1890) [NPR] and was first introduced in climate models by Budyko (1969) [JoC] and Sellers (1969) [JoC, MoS]. But although the principle of the albedo feedback is simple, a quantitative understanding of the effect is still far from complete. For instance, it is not clear whether this mechanism is the main reason for the high-latitude amplification of the warming signal.
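The principle of the feedback can be sketched with a zero-dimensional energy balance, in the general spirit of early energy balance models but not a reproduction of any of them. Everything numerical here is an assumption made for illustration: the solar constant, the 288 K reference temperature, the reference planetary albedo of 0.30, the hypothetical albedo slope of 0.002 per kelvin, and the 4 W m–2 test forcing. The effective emissivity is tuned so that the unforced model sits at the reference temperature.

```python
SIGMA = 5.670374419e-8   # Stefan-Boltzmann constant, W m^-2 K^-4
S0 = 1361.0              # solar constant, W m^-2 (assumed)
T_REF = 288.0            # reference surface temperature, K (assumed)
ALBEDO_REF = 0.30        # planetary albedo at T_REF (assumed)
ALBEDO_SLOPE = 0.002     # albedo decrease per K of warming (hypothetical)

# Effective emissivity tuned so the unforced model balances at T_REF.
EPS = (S0 / 4.0) * (1.0 - ALBEDO_REF) / (SIGMA * T_REF ** 4)


def albedo(temp_k, feedback):
    """Planetary albedo: fixed, or falling linearly as the planet warms."""
    if not feedback:
        return ALBEDO_REF
    a = ALBEDO_REF - ALBEDO_SLOPE * (temp_k - T_REF)
    return min(0.7, max(0.1, a))  # keep within a plausible range


def net_flux(temp_k, forcing, feedback):
    """Absorbed solar plus forcing minus emitted longwave, W m^-2."""
    absorbed = (S0 / 4.0) * (1.0 - albedo(temp_k, feedback))
    emitted = EPS * SIGMA * temp_k ** 4
    return absorbed + forcing - emitted


def equilibrium_temperature(forcing, feedback):
    """Find the temperature where net flux is zero, by bisection."""
    lo, hi = 200.0, 400.0
    for _ in range(100):
        mid = 0.5 * (lo + hi)
        if net_flux(mid, forcing, feedback) > 0.0:
            lo = mid   # still gaining energy: equilibrium is warmer
        else:
            hi = mid
    return 0.5 * (lo + hi)


def warming(forcing, feedback):
    """Equilibrium warming relative to the unforced state, K."""
    return (equilibrium_temperature(forcing, feedback)
            - equilibrium_temperature(0.0, feedback))


if __name__ == "__main__":
    print(f"fixed albedo:    {warming(4.0, False):.2f} K")
    print(f"albedo feedback: {warming(4.0, True):.2f} K")
```

For the same forcing, the run with a temperature-dependent albedo warms more than the fixed-albedo run. How much more depends entirely on the assumed slope, which is exactly the quantity the text describes as still poorly constrained.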

The potential cryospheric impacts on ocean circulation and sea level are of particular importance. There may be ‘large-scale discontinuities’ ( IPCC, 2001a [NPR] ) resulting from both the shutdown of the large-scale meridional circulation of the world oceans (see Section 1.4.6) and the disintegration of large continental ice sheets. Mercer (1968, 1978) proposed that atmospheric warming could cause the ice shelves of western Antarctica to disintegrate and that as a consequence the entire West Antarctic Ice Sheet (10% of the Antarctic ice volume) would lose its land connection and come afloat, causing a sea level rise of about five metres.

The importance of permafrost-climate feedbacks came to be realised widely only in the 1990s, starting with the works of Kvenvolden (1988, 1993), MacDonald (1990) [JoC, MoS] and Harriss et al. (1993) [NPR]. As permafrost thaws due to a warmer climate, CO2 and CH4 trapped in permafrost are released to the atmosphere. Since CO2 and CH4 are greenhouse gases, atmospheric temperature is likely to increase in turn, resulting in a feedback loop with more permafrost thawing. The permafrost and seasonally thawed soil layers at high latitudes contain a significant amount (about one-quarter) of the global total amount of soil carbon. Because global warming signals are amplified in high-latitude regions, the potential for permafrost thawing and consequent greenhouse gas releases is thus large.

In situ monitoring of the cryosphere has a long tradition. For instance, it is important for fisheries and agriculture. Seagoing communities have documented sea ice extent for centuries. Records of thaw and freeze dates for lake and river ice start with Lake Suwa in Japan in 1444, and extensive records of snowfall in China were made during the Qing Dynasty (1644–1912). Records of glacial length go back to the mid-1500s. Internationally coordinated, long-term glacier observations started in 1894 with the establishment of the International Glacier Commission in Zurich, Switzerland. The longest time series of a glacial mass balance was started in 1946 at the Storglaciären in northern Sweden, followed by Storbreen in Norway (begun in 1949). Today a global network of mass balance monitoring for some 60 glaciers is coordinated through the World Glacier Monitoring Service. Systematic measurements of permafrost (thermal state and active layer) began in earnest around 1950 and were coordinated under the Global Terrestrial Network for Permafrost.

The main climate variables of the cryosphere (extent, albedo, topography and mass) are in principle observable from space, given proper calibration and validation through in situ observing efforts. Indeed, satellite data are required in order to have full global coverage. The polar-orbiting Nimbus 5 satellite, launched in 1972, yielded the earliest all-weather, all-season imagery of global sea ice, using microwave instruments ( Parkinson et al., 1987 [NPR] ), and enabled a major advance in the scientific understanding of the dynamics of the cryosphere. Launched in 1978, the Television Infrared Observation Satellite (TIROS-N) yielded the first monitoring from space of snow on land surfaces ( Dozier et al., 1981 ). The number of cryospheric elements now routinely monitored from space is growing, and current satellites are now addressing one of the more challenging elements, variability of ice volume.

Climate modelling results have pointed to high-latitude regions as areas of particular importance and ecological vulnerability to global climate change. It might seem logical to expect that the cryosphere overall would shrink in a warming climate or expand in a cooling climate. However, potential changes in precipitation, for instance due to an altered hydrological cycle, may counter this effect both regionally and globally. By the time of the TAR, several climate models incorporated physically based treatments of ice dynamics, although the land ice processes were only rudimentary. Improving representation of the cryosphere in climate models is still an area of intense research and continuing progress (Chapter 8).

1.4.6 Ocean and Coupled Ocean-Atmosphere Dynamics

Developments in the understanding of the oceanic and atmospheric circulations, as well as their interactions, constitute a striking example of the continuous interplay among theory, observations and, more recently, model simulations. The atmosphere and ocean surface circulations were observed and analysed globally as early as the 16th and 17th centuries, in close association with the development of worldwide trade based on sailing. These efforts led to a number of important conceptual and theoretical works. For example, Edmund Halley first published a description of the tropical atmospheric cells in 1686, and George Hadley proposed a theory linking the existence of the trade winds with those cells in 1735. These early studies helped to forge concepts that are still useful in analysing and understanding both the atmospheric general circulation itself and model simulations ( Lorenz, 1967 [NPR] ; Holton, 1992 [NPR] ).